2026-03-06

在Grafana上关联Traces和Logs

我想要通过Grafana来实现类似Langsmith、Langfuse这类Agent观测平台的效果,核心需求是能看到LLM的消息内容。

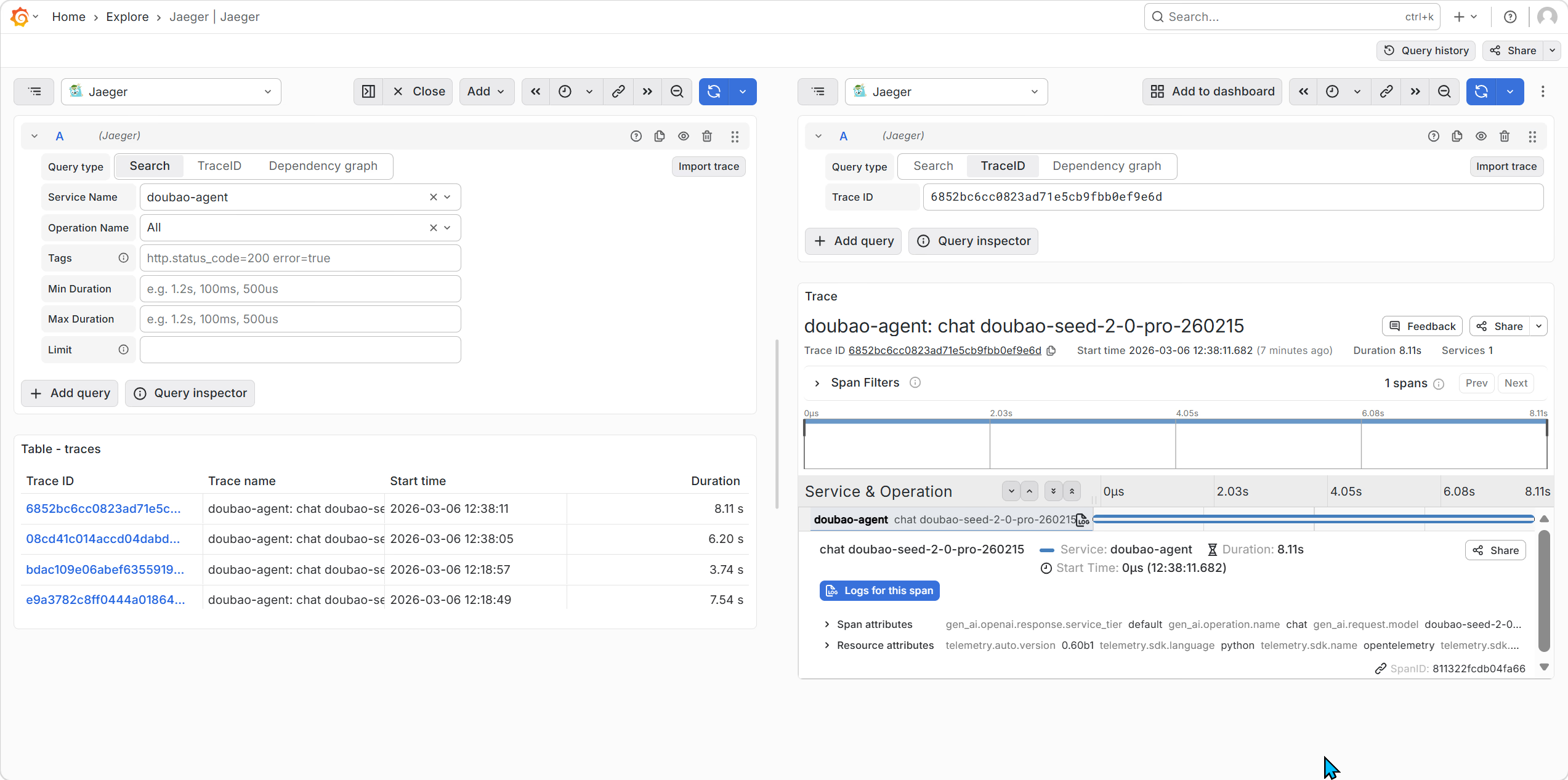

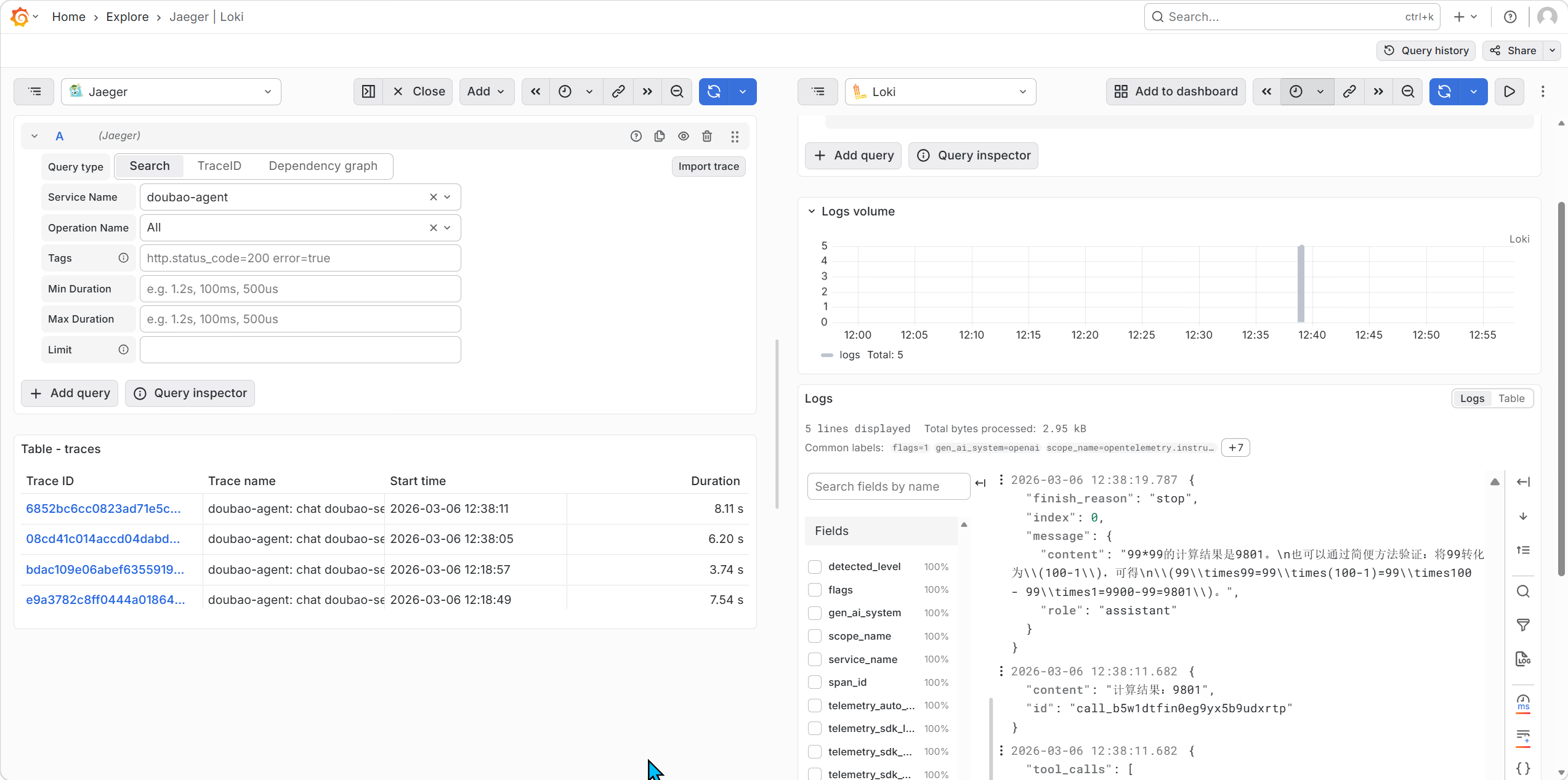

确保Jaeger和Loki正常工作

Jager:

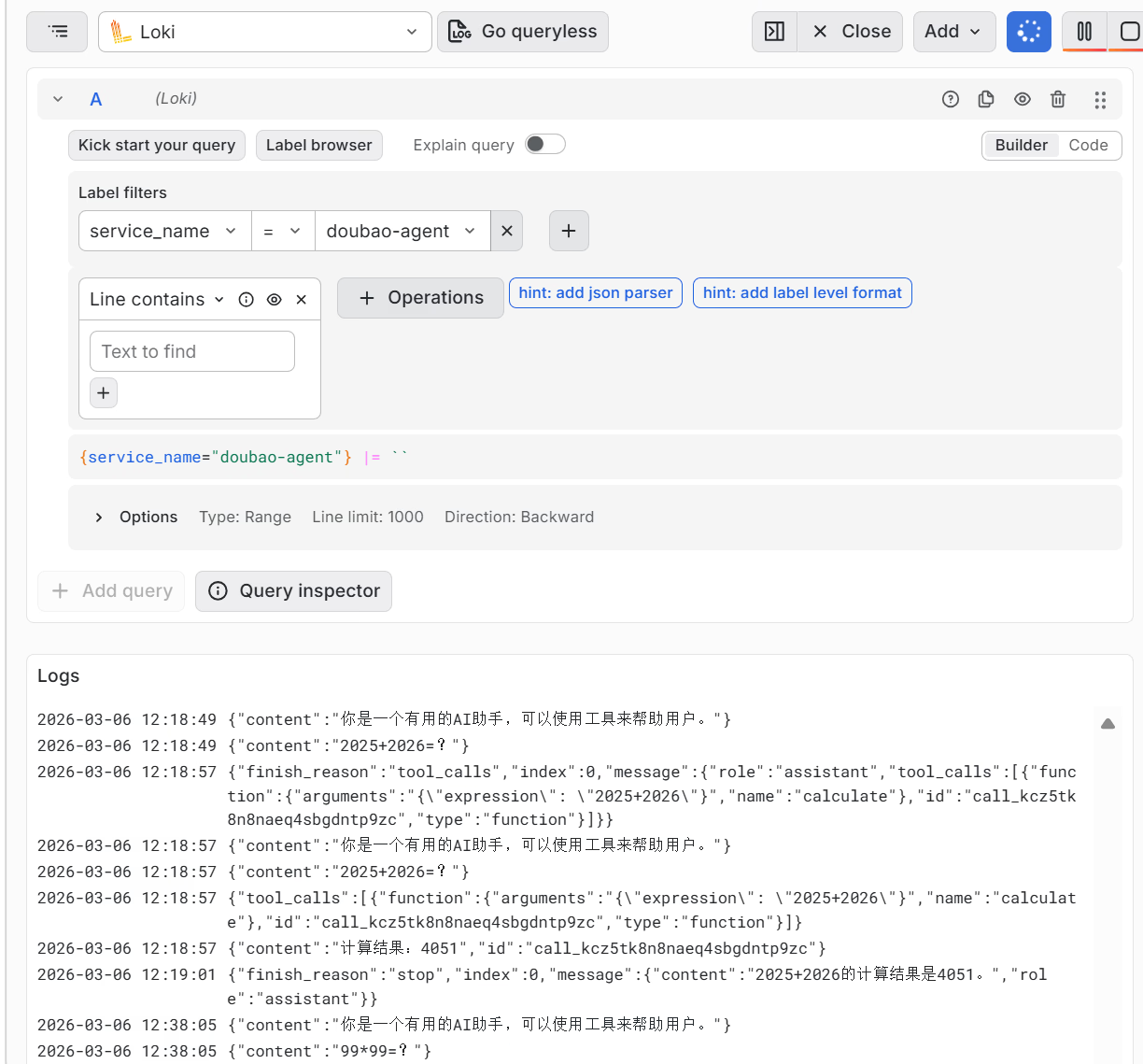

Loki:

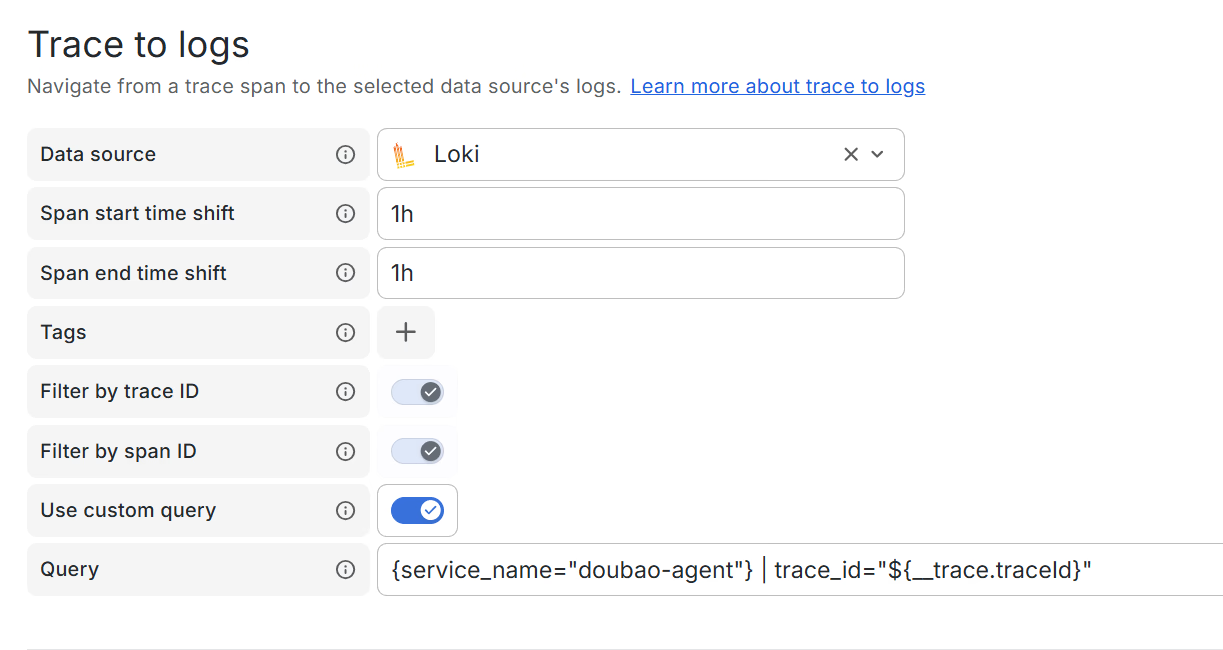

为Jaeger数据源设置Trace to Logs功能

登录Grafana平台,在Jaeger的数据源设置页面中配置Trace to logs功能:

(http://HOST_ADDR:3000/connections/datasources/edit/jaeger)

常规应该是配置Tags:

- 从Traces的数据源(这里是Jaeger)选取一个字段,比如service.name

- 再从Logs的数据源选择应该对应的字段(这里是Loki),比如service_name

- 若这两个值相同,便可以靠查询关联起来

https://grafana.com/docs/grafana/next/datasources/jaeger/#use-a-simple-configuration The tags you configure must be present in the spans attributes or resources for a trace to logs span link to appear.

但我这里遇到一个问题,应用发出的遥测中,无论是resource attributes还是span attributes中都不包含service.name这个字段。

奇怪了,那为什么之前的Jaeger面板里有doubao-agent这个名字呢? 查看了jaeger的原始数据后恍然大悟,process中有service.name这个字段。

"processes": {

"p1": {

"serviceName": "doubao-agent",

"tags": [

{

"key": "telemetry.auto.version",

"type": "string",

"value": "0.60b1"

},

{

"key": "telemetry.sdk.language",

"type": "string",

"value": "python"

},

{

"key": "telemetry.sdk.name",

"type": "string",

"value": "opentelemetry"

},

{

"key": "telemetry.sdk.version",

"type": "string",

"value": "1.39.1"

}

]

}

}最后的解决方案是在custom query里硬编码:

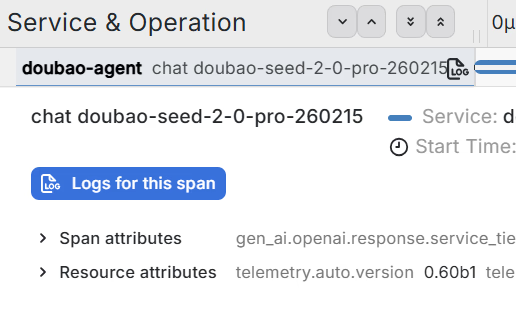

{service_name="doubao-agent"} | trace_id="${__trace.traceId}"成功的标志是出现Logs for this span按钮:

点击这个按钮后就会跳转到对应的Loki界面。

另一个坑是Loki查询界面的时间可能不对,需要点击🕰标志并将时间设置为last 1 hour(具体问题具体分析,Log产生的时间和Trace可能会有时间差)。

跳转后的效果如下:

但是这个效果和我想要的并不一样,这是跳转,而非将Log作为Trace的一个span进行展示。

使用Tempo替换Jaeger

Kimi建议我使用Tempo替换Jaeger,说这样可以将Logs内容作为Span进行展示。 替换并不顺利。

OpenClaw的Aha Moment

研究进展到现在,一个新的问题是,大模型的消息到底应该写到哪里? 是Traces Span Attributes中,还是Logs里?

和AI讨论中,我觉得写到Logs里似乎更合理,因为大模型的消息可能会很大,这样的文本消息适合放到Logs里。

我发现Grafana有一个AI Assisstant,这个助手接入了他们的知识库。对于我的提问,这个助手的回答有理有据。

There isn’t a single “official” best practice in the sources, but they do describe the trade‑offs and how Grafana and OpenTelemetry users are approaching this.

1. What traces vs. logs are for (in LLM/agent apps)

For LLM / agent workloads, traces are emphasized as the primary signal to understand the sequence of events (which tools/agents ran, whether a vector DB was used instead of an LLM call, etc.). [LLM guide; Office Hours 29]

Traces: “most prominent” signal for LLM apps, especially RAG and orchestration frameworks like LangChain, because they show the flow and which calls happened. [Office Hours 29]

Metrics: for aggregates (latency, cost, tokens, request volume). [LLM guide]

Logs: for detailed, often human‑oriented records and evaluation results, correlated to traces via trace/span IDs. [K8s logging call; Game of Traces]

2. What the OTel / Grafana folks recommend for LLM messages

The OpenTelemetry generative‑AI work (described in the community call) explicitly addresses where to put prompts/responses and similar content:

For early‑stage / baby applications, they suggest recording LLM input/output as span attributes (using the gen_ai.* semantic conventions). [GenAI community call]

At the same time, they acknowledge:

Text can be stored as span attributes, but binary or large content (images, audio, big messages) is problematic for traces. [GenAI community call]

There are cost and privacy concerns with storing full conversations in the telemetry backend; they propose a pattern where you:

keep a small, structured representation in span attributes, and

optionally upload full content to cold storage (e.g., S3) and store only a reference in telemetry. [GenAI community call]

Grafana Assistant itself follows a similar split: traces/logs/metrics in the observability stack, but conversations and trajectories are stored separately and linked, not fully in Tempo. [GenAI community call]

Also, in practice:

Many users capture prompts/responses directly in traces (as attributes) so they appear in the trace view and show the sequence of LLM/tool calls. [Office Hours 29]

Logs are recommended for individual evaluation results with reasoning, correlated to the trace via IDs. [GenAI community call]

3. How this maps to your options

Given your goal (“see agent thinking / LLM interaction steps directly in the trace view”), the sources point to a hybrid that is closer to Option B, with guardrails:

Use traces as the primary place to model the agent steps, and put enough message data on spans to understand the flow.

Concretely:

Model each LLM/tool/agent step as its own span (using the OTel gen‑AI semantic conventions), so the trace tree itself shows the orchestration. [LLM guide; GenAI community call]

On those spans, store:

Request/response metadata (model, temperature, top_p, token counts, cost, etc.). [LLM guide]

A truncated or redacted version of prompts/responses/reasoning that’s enough to debug and understand the step. [GenAI community call]

For full, verbose content:

Either store it in separate storage (or an LLM‑specific store) and keep a reference (URL, ID) on the span, as suggested in the OTel GenAI work. [GenAI community call]

Or, if you prefer Loki, emit logs with the full messages, correlated via trace/span IDs, and link traces↔logs in Grafana (Tempo+Loki correlation). [Game of Traces; Tempo announcement]

This gives you:

In Tempo’s trace view: the agent flow plus key prompt/response snippets and metadata (your main goal).

In Loki (or external storage): full conversations, reasoning, and eval logs when you need deep forensics or offline analysis.

4. Summary vs. your options

Option B (all messages in traces)

Matches the recommended starting point for small/early systems, but becomes expensive and risky (privacy, volume) if you store full conversations unbounded. [GenAI community call]

Option A (only logs, traces just link)

You lose the “LangSmith‑like” experience where the trace itself shows the agent’s thinking; the sources emphasize traces as the primary signal for LLM workflows. [Office Hours 29]

Best‑fit based on the docs:

Use traces as the canonical view of agent steps with summarized messages (Option B‑ish), and logs or external storage for full content and evals (Option A‑ish), all correlated via OpenTelemetry IDs. This is exactly the pattern the OTel GenAI work and Grafana teams describe. [GenAI community call]对于早期的agent项目来说,可以把大模型的消息写到span attributes里,但是后续可能面临的问题是大模型的消息越来越大导致存储空间问题、网络消耗问题以及随之而来的隐私安全问题。

大家是怎么做这件事的?

为了进一步确认这件事的实现方式,我觉得有必要调研一下目前大家是怎么做这件事的。

方法很简单,将插桩库clone到本地,看看大家将大模型的消息写到哪里了。

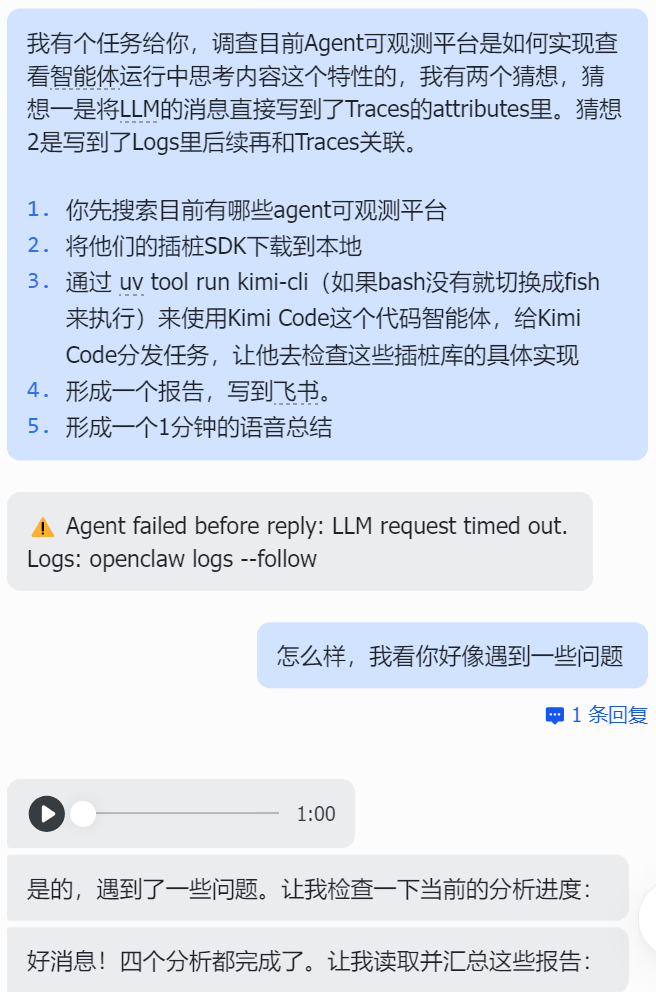

我给我的虾仔布置了这个任务:

我有个任务给你,调查目前Agent可观测平台是如何实现查看智能体运行中思考内容这个特性的,我有两个猜想,猜想一是将LLM的消息直接写到了Traces的attributes里。猜想2是写到了Logs里后续再和Traces关联。

1. 你先搜索目前有哪些agent可观测平台

2. 将他们的插桩SDK下载到本地

3. 通过 uv tool run kimi-cli(如果bash没有就切换成fish来执行)来使用Kimi Code这个代码智能体,给Kimi Code分发任务,让他去检查这些插桩库的具体实现

4. 形成一个报告,写到飞书。

5. 形成一个1分钟的语音总结过了十几分钟,虾仔给我返回了一个错误代码。我的预期也是,这个任务可能有点复杂,虾仔在执行的时候遇到问题很正常。

让我惊喜的是,分析都完成了,还生成了一分钟的语音总结。

这是我经历的第三次 Aha Moment,第一次是用ChatGPT改前端代码,第二次是和Cursor(Claude)用Rust重写OTel Collector取得阶段性成果的时候。

Todo APP是AI的杀手应用

从23年的第一份工作起,我开始了任务管理,我用WPS的表格搭建了任务管理系统,可以追踪一个任务的状态、DDL等内容,还可以做出炫酷的看板。

渐渐的,我发觉,光是操作任务管理软件,就花费了一些能意识到的精力和时间。

在见识到AI的能力后,我想,AI可以解决这个问题。

我设想的Todo APP的终极形态是AI可以凭借上下文和对环境的感知,自动操作任务管理软件,无需用户过度交互。

在26年的今天,我让虾仔创建任务管理表格,并将今天完成的任务,以及明天要做的事情都放进去。

他很快就完成了,和飞书的集成异常丝滑。